TL;DR: AI agents can regenerate code in hours. They can't regenerate the product decisions you made over months. If those decisions are captured in a behavior spec, "start from scratch" becomes a real option — not a catastrophe. Code is disposable. Decisions aren't.

There's a comment that's been stuck in my head since I published my last article. A developer wrote:

"I'm rebuilding my entire stack from scratch. Got too deep in not knowing what the AI was doing / why."

He's not alone. I've talked to founders who are quietly doing the same thing. The codebase works, but they can't explain it. They shipped fast with AI coding agents, and now the thing they built has outgrown their ability to hold it in their heads.

The old response to this was: don't rebuild. Rewrites are expensive. You'll lose institutional knowledge. You'll make the same mistakes again. That advice made sense when rewriting meant months of manual work.

It doesn't apply anymore.

Rebuilding is cheap now

AI coding agents (Claude Code, Codex, Cursor) can scaffold a project, implement features, and wire up integrations in hours. What used to be a three-month rewrite can now fit in a weekend. The cost of generating code has collapsed.

But here's what hasn't changed: the product decisions are still expensive.

Why is the grace period 14 days? Why does the auth flow force email verification before first login? Why does the export fail silently on empty results instead of returning an error? Why is the tax ID field deliberately nullable?

Those decisions were made over months of context — user feedback, compliance reviews, edge cases you discovered in production, conversations with your team. They're not in the code. The code just implements them. The reasoning lives in your head, in old Slack threads, in closed tickets nobody will ever read again.

When you rebuild from scratch, the code regenerates easily. The decisions don't.

What actually gets lost in a rebuild

I've been through this. You start fresh, the new codebase is clean, and then three weeks in you realize:

- You rebuilt the billing module but forgot the grace period was a compliance requirement, not a product choice

- You simplified the auth flow and removed the email verification step that existed to prevent account abuse during beta

- You made the grace period configurable per plan, but the original was deliberately fixed because support couldn't handle the complexity of per-plan grace periods

Each of these is a product decision that took real effort to reach the first time. Rediscovering them through production incidents is the most expensive way to learn.

The irony: you rebuilt because the codebase was incomprehensible. But the new codebase will become incomprehensible too — unless you capture the reasoning this time.

Why "just scan the old repo" doesn't work

The obvious shortcut is: have the AI agent scan the old codebase and use that knowledge to build the new one. I've tried this. It fails in two ways.

The agent carries forward too much. It scans everything — the hacks, the workarounds, the wrong architecture decisions, the tech debt you were trying to escape. The new project inherits the old project's baggage. You wanted fresh. You got a ghost.

The agent misses what actually matters. The important decisions aren't in the code. They're in your head. The agent carries forward implementation details but misses product truth. It replicates how you built it, not why you built it that way.

And there's a third problem most people don't think about: nobody knows what the agent assumed the first time. During the original build, the agent made implicit decisions — default behaviors, gap-filling, interpretations. Those assumptions are baked into the code, but nobody validated them. You don't even know they're there until something breaks in production.

What you actually need isn't a full repo scan. It's a filter — something that separates the product decisions worth keeping from the implementation details worth discarding, and surfaces the implicit assumptions you never reviewed.

The rewrite test

There's a test I've started using for any piece of product knowledge: would this rule still need to be true if you rewrote the module in a different language?

- "Line 42 checks `daysSince > 14`" → No. That's implementation. It changes with every rewrite.

- "Grace period is exactly 14 days, not configurable per plan" → Yes. That's a product decision. It survives any rewrite.

- "Use the `handleFailedPayment()` function" → No. Implementation detail.

- "No data deletion occurs during grace period" → Yes. Product promise.

Anything that passes the rewrite test is worth capturing before you rebuild. Everything else the AI agent can rediscover from the new code.

Making "start fresh" a real option

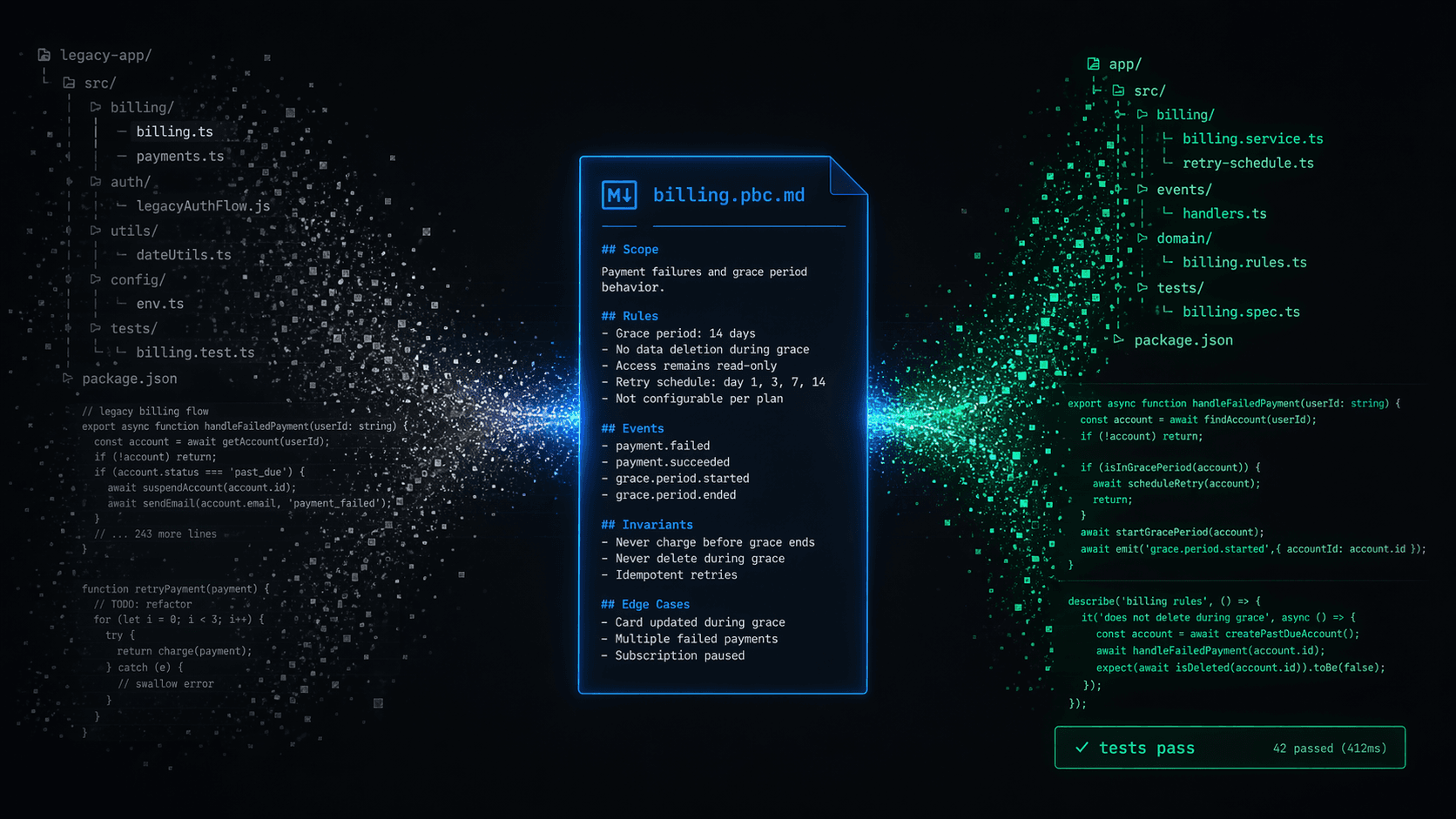

The pattern I've landed on is simple: before you rebuild, extract the product decisions into a behavior spec — a lightweight markdown file that captures what your product promises, not how the code works.

For billing, that looks like:

## Grace period after failed payment

### When

A subscription payment fails

### Then

- System enters a 14-day grace period

- User retains full access during grace period

- Daily retry attempts against payment method

- On day 14: downgrade to free tier

### Invariants

- Grace period is exactly 14 days — not configurable per plan

- No data deletion occurs during grace period

- Grace period cannot be extended manually by support

### Edge cases

- If user upgrades during grace period: new payment attempt immediately

- If payment method removed during grace: grace period continues, retry stops

That file is 20 lines. It takes 15 minutes to write. And it carries forward everything that matters about your billing grace period — regardless of whether the next implementation is TypeScript, Go, or something an AI agent writes from a prompt.
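One way to see why the spec travels well: its statements can be turned into checks that don't care how the code underneath is written. Here is a minimal Python sketch of that idea. Everything in it is an assumption made for illustration: the `Subscription` type, the `access_level` function, the plan names, and my reading of "On day 14" as "14 full days after the failed payment". It is not how any particular billing system works.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional

# From the spec: the grace period is exactly 14 days, not configurable per plan.
GRACE_PERIOD_DAYS = 14


@dataclass
class Subscription:
    """Hypothetical minimal subscription record, for illustration only."""
    plan: str
    payment_failed_on: Optional[date] = None  # None means payments are current


def access_level(sub: Subscription, today: date) -> str:
    """Return the plan the user can access today under the grace period rules."""
    if sub.payment_failed_on is None:
        return sub.plan
    days_since_failure = (today - sub.payment_failed_on).days
    # Full access during the grace period, downgrade to free on day 14.
    # Assumption: "day 14" means 14 full days after the failure.
    if days_since_failure < GRACE_PERIOD_DAYS:
        return sub.plan
    return "free"


def check_invariants(access_fn) -> None:
    """Check spec statements against any implementation with this signature."""
    failed = date(2024, 1, 1)
    # The plan names are made up; the point is that the rule is the same for all.
    for plan in ("starter", "pro", "enterprise"):
        sub = Subscription(plan=plan, payment_failed_on=failed)
        # User retains full access during the grace period.
        assert access_fn(sub, failed + timedelta(days=13)) == plan
        # On day 14: downgrade to free tier.
        assert access_fn(sub, failed + timedelta(days=14)) == "free"


check_invariants(access_level)
```

The useful part is `check_invariants`, not `access_level`. If an agent regenerates the billing module next month, the implementation can be thrown away and the checks still say what must remain true.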

Surfacing what the agent assumed

Writing behavior specs from scratch works. But there's a faster path: have a tool scan your existing codebase, form hypotheses about the product behaviors it finds, and sort them with you into "yes, that's intentional," "not sure yet," and "that's wrong."

The interesting part isn't cataloguing what you already know. It's what the process surfaces that you didn't know. During the original build, the AI made decisions nobody reviewed — default behaviors, gap-filling, implicit assumptions that looked reasonable but were never validated. Those assumptions are invisible in the code. Reviewing hypotheses side-by-side makes them visible for the first time.

The result is a clean set of behavior specs — only the product decisions that matter, with everything else filtered out. No implementation baggage. No implicit assumptions carrying forward unchecked. Just the portable truth you need to start fresh.
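To make the sorting step concrete, here is a rough sketch of the data involved. The names (`Verdict`, `Hypothesis`, `triage`) are hypothetical and not the API of any real tool, and the example hypotheses are borrowed from earlier in this article rather than from an actual scan.

```python
from dataclasses import dataclass
from enum import Enum


class Verdict(Enum):
    INTENTIONAL = "yes, that's intentional"
    UNSURE = "not sure yet"
    WRONG = "that's wrong"


@dataclass
class Hypothesis:
    """A product behavior inferred from the code, awaiting human review."""
    statement: str
    verdict: Verdict = Verdict.UNSURE  # nothing is trusted until reviewed


def triage(hypotheses):
    """Group hypotheses by verdict. Only INTENTIONAL ones belong in the spec."""
    buckets = {verdict: [] for verdict in Verdict}
    for hypothesis in hypotheses:
        buckets[hypothesis.verdict].append(hypothesis)
    return buckets


# Illustrative review results, not output from a real tool.
reviewed = [
    Hypothesis("Grace period is exactly 14 days", Verdict.INTENTIONAL),
    Hypothesis("Export fails silently on empty results", Verdict.UNSURE),
    Hypothesis("Grace period is configurable per plan", Verdict.WRONG),
]

buckets = triage(reviewed)
spec_candidates = [h.statement for h in buckets[Verdict.INTENTIONAL]]
```

Defaulting every hypothesis to "not sure yet" is a deliberate choice in this sketch: an assumption the agent made should have to earn its way into the spec, not slip in because nobody looked.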

Portable product truth

This is the part that changed my thinking about rebuilds.

If your product decisions are captured in behavior specs, they're portable across any implementation. You can:

- Start a new project and point the AI agent at your behavior specs — it builds with all constraints intact

- Try a completely different architecture — the product truth travels with you

- Let an agent regenerate entire modules — the spec tells it what must remain true

- Hand the project to a new developer (or a new agent) — they know what the product promises without reading every line of code

The behavior spec doesn't describe the code. It describes the decisions that the code must honor. That's why it survives rewrites — it's at the right level of abstraction.
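Because the spec is plain markdown with a predictable shape, handing it to an agent can be as simple as parsing the sections and putting the invariants in the prompt. The sketch below assumes the heading layout from the billing example above (`##` for the behavior, `###` for each section). The function names and the prompt wording are made up for illustration, and the parser is deliberately naive.

```python
SPEC = """\
## Grace period after failed payment

### When
A subscription payment fails

### Invariants
- No data deletion occurs during grace period
- Grace period cannot be extended manually by support
"""


def parse_spec(markdown: str) -> dict:
    """Parse one behavior spec into {'title': str, 'sections': {name: [items]}}."""
    spec = {"title": "", "sections": {}}
    current = None
    for raw in markdown.splitlines():
        line = raw.strip()
        if not line:
            continue
        if line.startswith("### "):
            # A new section such as When, Then, Invariants, Edge cases.
            current = line[4:].strip()
            spec["sections"][current] = []
        elif line.startswith("## "):
            spec["title"] = line[3:].strip()
        elif current is not None:
            # Drop the leading bullet marker, if any.
            spec["sections"][current].append(line.lstrip("- ").strip())
    return spec


def build_agent_prompt(spec: dict) -> str:
    """Render the invariants as constraints for a coding agent (wording is hypothetical)."""
    lines = [f"Implement: {spec['title']}", "These statements must remain true:"]
    for item in spec["sections"].get("Invariants", []):
        lines.append(f"- {item}")
    return "\n".join(lines)


prompt = build_agent_prompt(parse_spec(SPEC))
```

Nothing here depends on the target language or framework of the rebuild, which is the point: the same parsed spec can feed a prompt, a checklist for a new developer, or a set of tests.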

The real unlock

Here's what I didn't expect: once your product decisions are explicit, rebuilding becomes a feature, not a failure.

Your codebase got messy? Start fresh — the decisions carry forward.

Want to try a different framework? Go for it — the product truth is framework-agnostic.

AI-generated code grew beyond what you can hold in your head? That's fine — the behavior spec stays compact and readable. It's the summary of everything that matters.

The constraint used to be: "rebuilding is too expensive because you'll lose what you know." With behavior specs, the constraint is gone. The code is the cheap part. The decisions are the expensive part. And the decisions are captured.

Start with what would hurt most

You don't need to spec your entire product before rebuilding. Start with the module where getting it wrong would hurt the most. Usually billing. Sometimes auth. Occasionally entitlements.

Write down the behaviors, invariants, and edge cases. 15 minutes per module. That's your portable product truth.

Then rebuild with confidence — or don't rebuild at all. Either way, the decisions are no longer trapped in your head.

The PBC spec is open source at github.com/stewie-sh/pbc-spec. You can browse example contracts in the PBC viewer.

If your product decisions live only in your memory, they're one rebuild away from being lost. Stewie is the workspace that makes them explicit.